
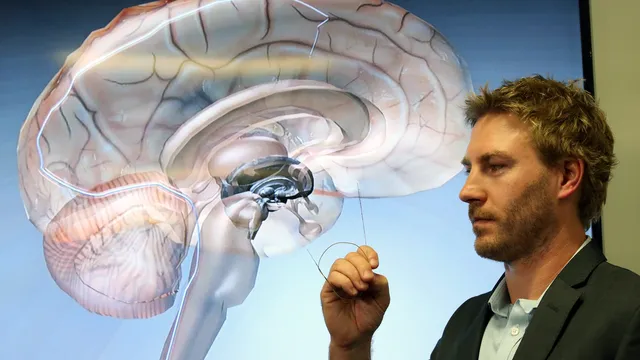

Scientists have created a brain implant that reads our thoughts

For the first time, scientists have managed to translate silent thoughts in real time thanks to a brain implant connected to artificial intelligence.

This technology will enable a new form of communication for paralyzed people, but it raises many important questions about privacy, consent, and mental health, according to BGNES.

This is an ability we thought was reserved for fictional superheroes: reading minds. But with direct neural interfaces, or brain-machine interfaces (BMIs), advancing by leaps and bounds for several years now, it is becoming a reality. A study at Stanford University in the US has achieved the direct decoding of inner speech, that is, what a person is thinking of saying, without any gesture or sound.

BMIs work by connecting a person's nervous system to devices capable of interpreting their brain activity, allowing them to perform actions, such as using a computer or moving a prosthetic arm, by thought alone. This gives people with disabilities the opportunity to regain some independence. Until now, researchers had been able to give a voice to people who cannot speak by capturing signals in the brain's motor cortex as they tried to move their mouth, tongue, lips, and vocal cords. The Stanford researchers have now done away with the need to attempt physical speech at all.

"If we can decode [inner speech, ed.], it would save us physical effort," Stanford neuroscientist Erin Kunz, lead author of the new study, told The New York Times. "It would be less tiring, and users could use the system for longer," she said.

These findings, published in the journal Cell, could allow people who cannot speak to communicate even more easily. The system already reaches 74% accuracy in real time, an unprecedented result for this type of technology. But decoding the inner voice is not without risks. During testing, the implant sometimes picked up unexpected signals, which required the introduction of a mental password to protect certain thoughts.

"For the first time, we are able to understand what brain activity looks like when you are simply thinking about speaking," explained Erin Kunz, adding:

"Thanks to multi-unit recordings from four participants, we found that inner speech is strongly represented in the motor cortex and that imagined sentences can be decoded in real time."

To achieve this result, the research team implanted microelectrodes in the motor cortex (the region of the brain that controls movement, including the movements of speech, ed.) to record neural signals. The study participants had severe paralysis caused by amyotrophic lateral sclerosis (ALS, also known as Charcot's disease) or by stroke. The researchers asked them either to attempt to speak or to imagine saying a series of words. Both tasks activated overlapping areas of the brain and produced similar patterns of neural activity.

Artificial intelligence (AI) models were then trained to recognize phonemes, the smallest units of sound in speech, and to translate these signals into words and then into the sentences that participants were thinking but not saying aloud. During a demonstration, the implant translated the imagined sentences with 74% accuracy.

Frank Willett, assistant professor of neurosurgery at Stanford, told the Financial Times that the decoding is reliable enough to show that, with improvements in implanted hardware and recognition software, "future systems could restore smooth, fast, and comfortable speech through internal speech alone." But this exciting progress comes with privacy concerns. The study also revealed that the BMI can capture inner words that participants had not been asked to imagine saying, raising the prospect of personal thoughts being leaked against the user's will.

"This means that the line between private and public thoughts may be more blurred than we assume," warns Prof. Nita Farahany, a specialist in the impact of new technologies on society, law, and ethics. "The more we advance in this field of research, the more transparent our brains become, and we must acknowledge that this era of brain transparency is truly a new frontier for us," she adds.

This permeability between deliberate and private thoughts fuels the fear of unwanted mind reading. The issue is no longer just medical but also social: how can we ensure that every person's mind remains an inviolable sanctuary?

To protect privacy, the Stanford researchers devised a password system that prevents the BMI from decoding inner speech unless the user unlocks it. In the study, using the phrase "chitty chitty bang bang" as a password prevented unwanted decoding of private thoughts with more than 98% success. This technical solution is a reminder that cognitive security is becoming a central issue, just as cybersecurity has. Commenting on this password system, Cohen Marcus Lionel Brown, a bioethicist at the University of Wollongong, believes that "this study is a step in the right direction from an ethical point of view." According to him, "it would give patients even more power to decide what information they want to share and when."

In an earlier interview with NPR in March 2024, Nita Farahany, author of The Battle for Your Brain, discussed the rise of BMIs and said of the brain: "It is our most sensitive organ. Opening it up to the rest of the world profoundly changes our humanity and our relationships with others."

For her part, Evelina Fedorenko, a cognitive neuroscientist at MIT who was not involved in the study, also points out that much of human thinking is nonverbal. “What they record is largely fiction,” she says, referring to spontaneous and unstructured thinking.

Erin Kunz herself acknowledges that, given the current state of knowledge, patients cannot yet hold conversations using their inner voice. "These results are primarily proof of concept," she says.

Enthusiasm for such research, and the fantasies it inspires, should therefore be tempered. At this stage, the vocabulary remains limited, accuracy is far from perfect, and implantation is invasive. The equipment requires extensive training and constant adjustment. Before widespread clinical use, the algorithms, the hardware interfaces, and the implantation procedures will all need to improve, which, according to the researchers, will take several more years.

These discoveries are nonetheless fueling a heated debate over "neurorights," a set of emerging rights aimed at protecting the mind from outside interference. In other words, will our "mental security" have to be defined and protected within a few years? For while it promises to give a voice back to those who have lost it, this breakthrough also sketches the contours of a future in which silences can speak, even against our will. | BGNES

